Building AI-Enabled Engineering, Product & Design Teams in 2026

This article shares practical lessons from building Forgent AI's engineering organisation around autonomous coding agents, covering what changed when AI crossed a capability threshold in early 2026.

Leonard Wossnig

Co-Founder and CTO

Since January 2026, I've watched individual engineers on our team ship multiple features in a single week that would have taken us a month or more before. This was not an incremental improvement but a sudden step function. The models and the harnesses around them crossed a threshold, and the entire unit economics of engineering changed.

I'm not the only one seeing this. Shopify's Tobi Lütke says he's "shipped more code in the last three weeks than the decade before." Etienne de Bruin, who coaches dozens of CTOs, reports a junior engineer shipping three production services in a single week. Harvey's Gabe Pereyra says the bottleneck in product development has flipped from implementation to review and prioritisation.

Here I want to share six lessons on how AI has already changed product and engineering teams, and what I believe it takes to build organisations that can compete today, based on our experience rebuilding engineering at Forgent AI around autonomous agents.

I. The Capability Threshold

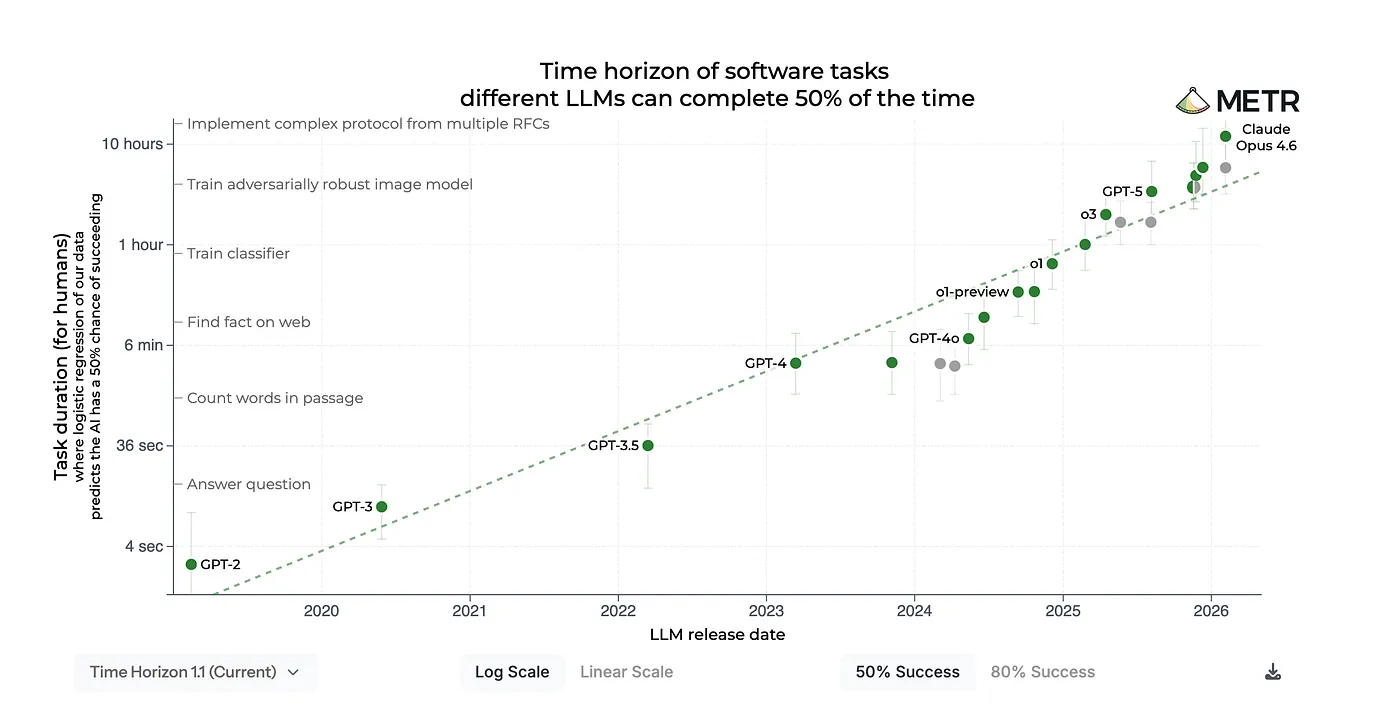

Around January 2026, coding agents crossed a coherence threshold. The models didn't just get faster at autocomplete. They became capable of sustained, multi-step reasoning: planning an implementation, executing it across files, running tests, diagnosing failures, and iterating autonomously until the tests pass. This follows an exponential trend visible in the METR benchmarks and others.

Two breakthroughs drove this. Reinforcement learning from verifiable rewards (RLVR), which trains models against machine-checkable outcomes like passing tests, dramatically improved code quality and self-correction. Inference-time compute scaling gave models the ability to "think harder" on complex problems. On top of this, longer context windows, robust tool use, and harness improvements enabled multi-turn coherence across dozens of steps.

Karpathy described this as moving from "vibe coding" to "agentic engineering," where engineers orchestrate autonomous agents rather than writing code directly. Harvey's Gabe Pereyra captures the speed of this shift: his parents, both Stanford-educated computer scientists, one at Apple and the other a Stanford professor, were totally blindsided by the capability jump. If domain experts didn't see it coming, most industries haven't even begun to process what this means.

The task-completion time horizon is the task duration (measured by human expert completion time) at which an AI agent is predicted to succeed with a given level of reliability. For example, the 50%-time horizon is the duration at which an agent is predicted to succeed half the time. The graph in this section shows the 50%- and 80%-time horizons for frontier AI agents, calculated using their performance on over a hundred diverse software tasks. Note: the plot is log-linear, so a straight line corresponds to exponential growth. Source: [METR benchmarks]
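To make the definition concrete, here is a minimal sketch of how a time horizon can be read off a fitted success curve. It assumes the probability of success is modelled as a logistic function of the log of task duration; the function name and the parameter values are hypothetical and chosen only for illustration, not taken from METR's data.

```python
import math


def time_horizon(intercept: float, slope: float, reliability: float) -> float:
    """Task duration (in human-expert minutes) at which predicted success
    equals `reliability`.

    Assumed model (an illustration, not a published fit):
        p(t) = sigmoid(intercept - slope * log2(t)),  slope > 0
    """
    if not 0.0 < reliability < 1.0:
        raise ValueError("reliability must be strictly between 0 and 1")
    if slope <= 0:
        raise ValueError("slope must be positive (longer tasks are harder)")
    # Invert the sigmoid: logit(reliability) = intercept - slope * log2(t)
    logit = math.log(reliability / (1.0 - reliability))
    return 2.0 ** ((intercept - logit) / slope)


# Hypothetical fitted parameters, for illustration only.
h50 = time_horizon(intercept=6.0, slope=1.0, reliability=0.5)
h80 = time_horizon(intercept=6.0, slope=1.0, reliability=0.8)
print(f"50% horizon: {h50:.0f} min, 80% horizon: {h80:.1f} min")
```

Under this model the 80% horizon is always shorter than the 50% horizon, because demanding higher reliability restricts the agent to easier, shorter tasks.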

II. What We Learned Rebuilding Our Engineering Org Around Agents

Lesson 1: Verifiable goals are the unlock. The single biggest enabler of long-running agents is giving them a measurable goal. When an agent can run tests, see failures, and iterate until they pass, you can hand off entire features. Shopify's Lütke pointed autoresearch at their templating engine and got 53% faster rendering from 93 automated commits overnight. Kent Beck recently argued that TDD is more relevant than ever precisely because agents need machine-verifiable feedback loops. On the flip side, vague instructions produce vague results. UI/UX work with instructions like "create an intuitive UI" still doesn't produce good outcomes.
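The loop described in Lesson 1 can be sketched in a few lines. This is a simplified illustration, not Forgent's implementation: `iterate_until_green`, `propose_fix`, and the stand-in agent below are hypothetical names, and a real setup would pass a call to an actual coding agent instead.

```python
import subprocess
from typing import Callable


def run_tests(command: list[str]) -> tuple[bool, str]:
    """Run a test command and return (passed, combined output)."""
    result = subprocess.run(command, capture_output=True, text=True)
    return result.returncode == 0, result.stdout + result.stderr


def iterate_until_green(
    propose_fix: Callable[[str], None],
    check: Callable[[], tuple[bool, str]],
    max_attempts: int = 5,
) -> int:
    """Check the goal; on failure hand the feedback to the agent and retry.

    Returns the number of checks needed, or raises once the budget is spent.
    """
    for attempt in range(1, max_attempts + 1):
        passed, feedback = check()
        if passed:
            return attempt
        if attempt < max_attempts:
            propose_fix(feedback)  # hypothetical hook: the agent edits the code
    raise RuntimeError(f"goal not reached after {max_attempts} attempts")


# Stand-in "agent" and "test suite" so the sketch runs without a real project.
state = {"failing": 2}


def fake_check() -> tuple[bool, str]:
    return state["failing"] == 0, f"{state['failing']} failing tests"


def fake_agent(feedback: str) -> None:
    state["failing"] -= 1  # pretend each round fixes one failure


attempts = iterate_until_green(fake_agent, fake_check)
print(f"green after {attempts} checks")
```

In a real project, `check` could be `lambda: run_tests(["pytest", "-q"])`. The attempt budget matters: without it, an agent facing an unreachable goal would iterate, and spend tokens, indefinitely.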

Lesson 2: The harness matters more than the model. An agent is only as effective as the harness around it: how context is assembled, how tools are exposed, and how feedback loops (tests, linters, evaluators) enable self-verification. At Forgent, we invested heavily in structured rules (CLAUDE.md files, skill definitions), coding infrastructure (preview servers, automated tests, evals), and broad access to information across the business. LangChain reported improving their coding agent from 52.8% to 66.5% on TerminalBench by changing only the harness. But there's a corollary: without good practices, the velocity multiplier becomes a debt accelerator. The 2025 DORA report confirmed that AI amplifies whatever you already have. Teams with strong delivery practices get faster, while teams without them accumulate technical debt at unprecedented speed.

Lesson 3: Guardrails and QA matter more than speed alone. The flip side of 10x output is 10x more risk that quality drops. Stack Overflow's analysis found 75% more logic errors in AI-created PRs compared to human-written ones. Amazon experienced this in early 2026 when AI-assisted code changes contributed to service failures. At Forgent we deploy agent swarms including BUGBOT and Claude Code Review for continuous code review, and treat token spend on review tooling as a high-ROI investment. Speed without quality and reliability is worthless.

Lesson 4: The bottleneck shifts to judgment and taste. When building becomes cheap, the most expensive mistake is building the wrong thing. Product sense, user research, and the discipline to say "no" became our highest-leverage skills. Product discovery and human review are now the bottlenecks, because these activities cannot yet be meaningfully automated. We're seeing the emergence of the product engineer: a role combining user empathy, product sense, and technical fluency. As AI reduces the depth required in each discipline, companies need all-rounders who merge disciplines. Not everyone agrees. Anthropic's growth team is hiring more PMs instead. The right answer likely depends on team size and coordination overhead.

Lesson 5: The organisation must change, not just the tools. When agents handle execution, the remaining human work requires tight coordination. Siloed functions create handoff overhead that agents don't eliminate. At Forgent, project teams now consist of one to two engineers, one PM, and one designer, small enough to sit in one room and move quickly. In the medium term, I believe functions like product, design, and engineering will merge further. As Dorsey and Botha argue, organisations must move from using humans as information routers to building a company "world model" where AI handles coordination and humans contribute judgment and strategic thinking.

Lesson 6: Context switching, not compute, limits parallelism. Agents can run in parallel, but humans can't context-switch indefinitely. My effective limit today is two parallel agents working on independent features. Beyond that, review overhead exceeds time saved. The scarce resource is human attention. Engineers need to learn a new skill: switching from coding to supervising agents, knowing where AI fails, which code to review, and how to scale themselves as agent managers.
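A toy model shows why the benefit of parallel agents peaks early. It assumes each agent saves a fixed amount of implementation time, costs a fixed amount of review time, and that every pair of concurrently supervised agents adds a context-switching cost. The function names and all numbers are hypothetical and picked only to illustrate the shape of the curve, not measured at Forgent.

```python
def net_hours_saved(
    agents: int,
    saved_per_agent: float,
    review_per_agent: float,
    switch_cost: float,
) -> float:
    """Net human hours saved when supervising `agents` agents in parallel.

    Assumption: context-switching cost grows with the number of agent pairs
    a person has to keep in their head at once.
    """
    pairs = agents * (agents - 1) / 2
    return agents * (saved_per_agent - review_per_agent) - switch_cost * pairs


def best_parallelism(max_agents: int, **params: float) -> int:
    """Agent count in 1..max_agents that maximises net hours saved."""
    return max(
        range(1, max_agents + 1),
        key=lambda n: net_hours_saved(n, **params),
    )


# Hypothetical numbers, for illustration only.
params = {"saved_per_agent": 6.0, "review_per_agent": 2.0, "switch_cost": 3.0}
for n in range(1, 5):
    print(n, net_hours_saved(n, **params))
best = best_parallelism(4, **params)
```

Because the switching term grows quadratically while the savings grow linearly, adding agents eventually turns the net saving negative. Lowering review time or switching cost, for example through better review tooling, is what moves the optimum upward.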

III. What This Means For Tech Teams

Invest in infrastructure, not just models. Build the harness layer into your CI/CD: structured rules, preview servers, automated evals, and quality guardrails. Every rule you encode and every feedback loop you automate makes every future agent run more reliable.

Automate context assembly. Agent effectiveness depends on having the right context at the right time. Integrate your tools (Slack, Notion, GitHub, Figma, your codebase) so agents can pull from all of them. Treat context curation as ongoing infrastructure work.

Restructure around small, integrated teams. Reduce coordination overhead by forming small units where a single person or tiny team owns the full lifecycle from discovery to shipping. Less handoff means less lost context.

Accept that the learning curve is real work. Restructuring teams, encoding institutional knowledge, and learning to work with agents is organisational change, not a tool swap. It takes time to build the muscle.

IV. The Most Important Part

Perhaps the most important lesson is this: the only certainty is change. In the 2010s, organisations could operate the same way for years. By 2026, the cycle for reassessing whether your ways of working remain fit for purpose has compressed to just a few months.

The recent release of Mythos shows we are far from the end of the line. Models and harnesses will keep improving, and self-training (AI being used to improve itself) will likely accelerate the rate of change further. Early proofs of concept by MIT and others have shown this works, and I'm certain frontier model providers already leverage their own traces and models to improve their harnesses.

For any organisation that wants to stay at the competitive frontier of software development, constant reinvention is no longer a competitive advantage but the price of entry. The question is no longer whether to adapt. It's whether you can adapt fast enough.
